A super-easy way to record, search and compare AI experiments.

Project description

An easy-to-use & supercharged open-source experiment tracker

Aim logs your training runs, enables a beautiful UI to compare them and an API to query them programmatically.


About Aim

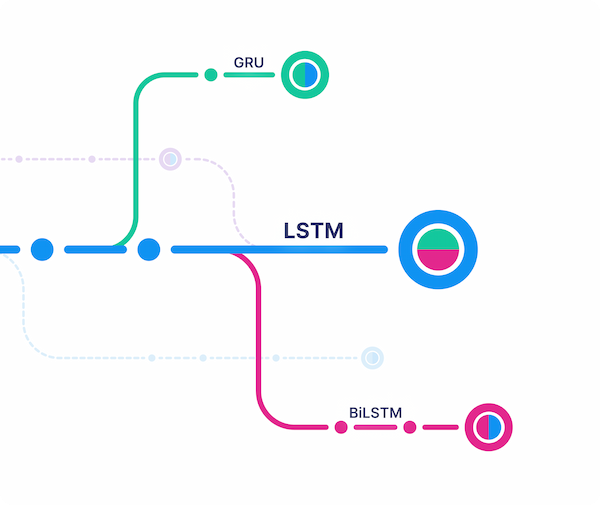

| Track and version ML runs | Visualize runs via beautiful UI | Query runs metadata via SDK |
|---|---|---|

Aim is an open-source, self-hosted ML experiment tracking tool. It's good at tracking lots (1000s) of training runs and it allows you to compare them with a performant and beautiful UI.

Besides the Aim UI, you can use the SDK to query your runs' metadata programmatically. That's especially useful for automations and additional analysis in a Jupyter Notebook.

Aim's mission is to democratize AI dev tools.

Why use Aim?

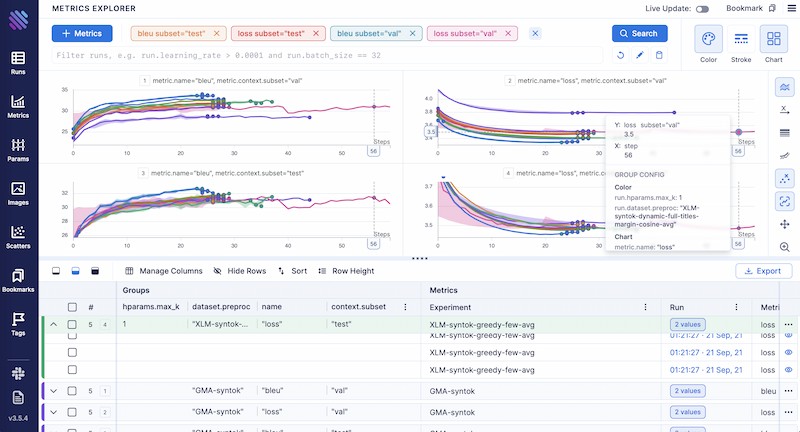

Compare 100s of runs in a few clicks - build models faster

- Compare, group and aggregate 100s of metrics thanks to effective visualizations.

- Analyze, learn correlations and patterns between hparams and metrics.

- Easy pythonic search to query the runs you want to explore.
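The grouping and aggregation described above can be pictured with plain Python. This is only a minimal sketch of the idea, not Aim's implementation or API: the `runs` records, their field names, and the `group_and_aggregate` helper are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical run records: hyperparameters plus one final metric per run.
runs = [
    {"hparams": {"learning_rate": 0.001, "batch_size": 32}, "val_loss": 0.5},
    {"hparams": {"learning_rate": 0.001, "batch_size": 64}, "val_loss": 0.25},
    {"hparams": {"learning_rate": 0.01, "batch_size": 32}, "val_loss": 0.75},
    {"hparams": {"learning_rate": 0.01, "batch_size": 64}, "val_loss": 1.0},
]


def group_and_aggregate(runs, param, metric):
    """Group runs by one hyperparameter and average a metric per group."""
    groups = defaultdict(list)
    for run in runs:
        groups[run["hparams"][param]].append(run[metric])
    return {value: mean(values) for value, values in groups.items()}


# Average validation loss for each learning rate.
summary = group_and_aggregate(runs, "learning_rate", "val_loss")
print(summary)
```

Swapping `"learning_rate"` for `"batch_size"` regroups the same runs by a different hyperparameter, which is the kind of exploration the UI is meant to make a few clicks.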

Deep dive into details of each run for easy debugging

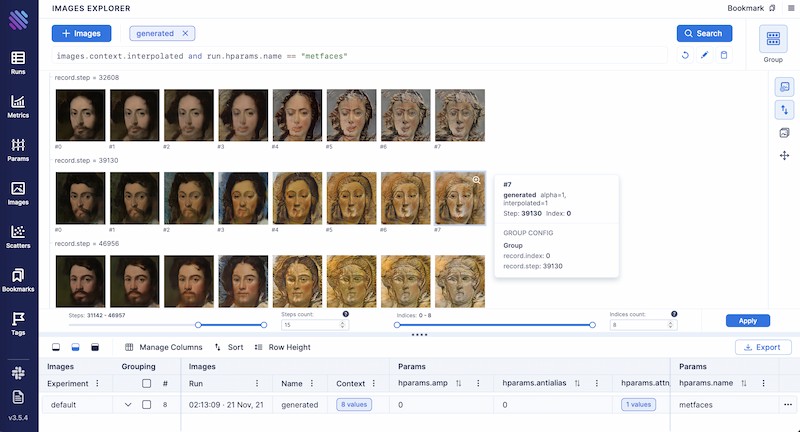

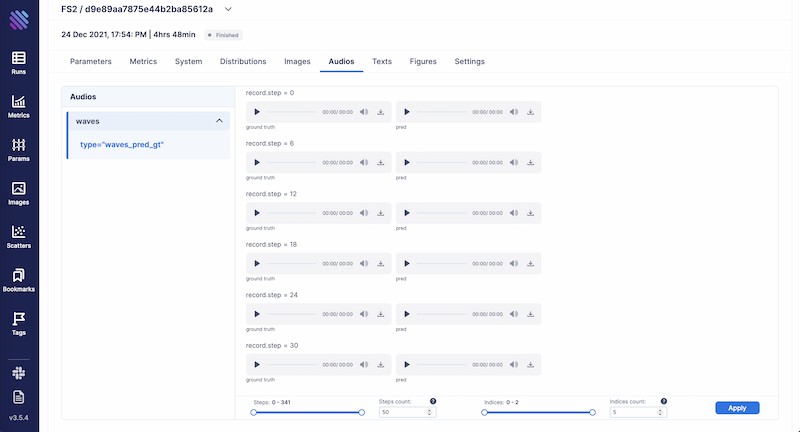

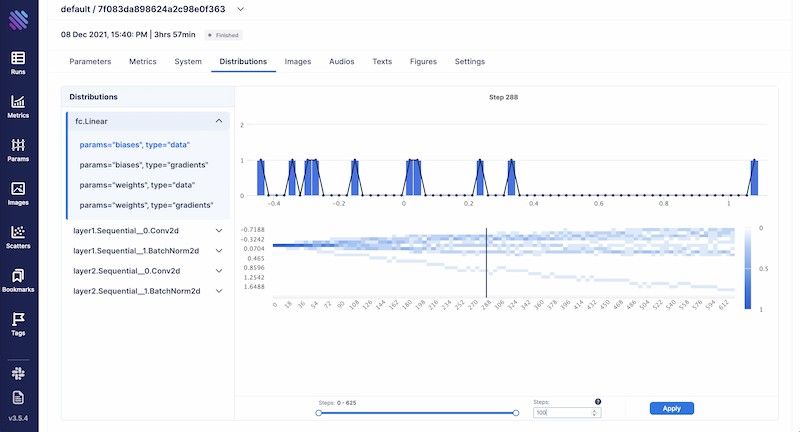

- Hyperparameters, metrics, images, distributions, audio, text - all available at hand on an intuitive UI to understand the performance of your model.

- Easily track plots built via your favourite visualisation tools, like plotly and matplotlib.

- Analyze system resource usage to effectively utilize computational resources.

Have all relevant information organised and accessible for easy governance

- Centralized dashboard to holistically view all your runs, their hparams and results.

- Use SDK to query/access all your runs and tracked metadata.

- You own your data - Aim is open source and self hosted.

Demos

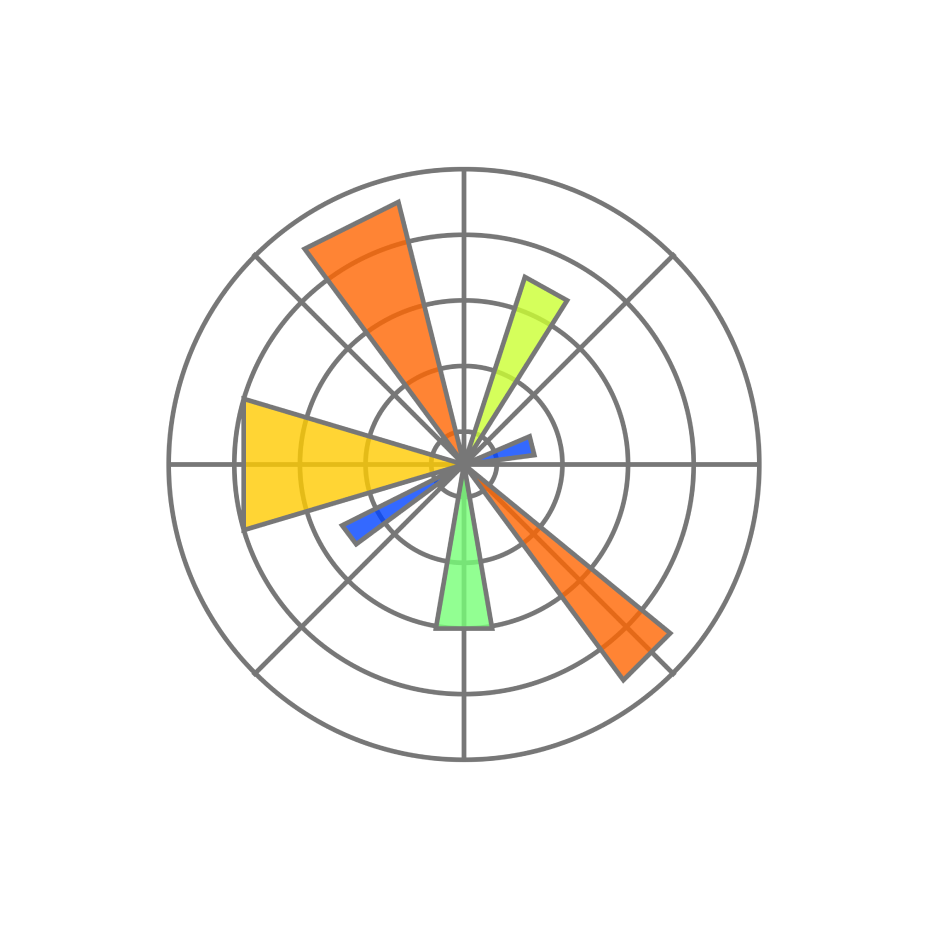

| Machine translation | lightweight-GAN |
|---|---|
| Training logs of a neural translation model (from WMT'19 competition). | Training logs of 'lightweight' GAN, proposed in ICLR 2021. |

| FastSpeech 2 | Simple MNIST |
|---|---|
| Training logs of Microsoft's "FastSpeech 2: Fast and High-Quality End-to-End Text to Speech". | Simple MNIST training logs. |

Quick Start

Follow the steps below to get started with Aim.

1. Install Aim on your training environment

    pip3 install aim

2. Integrate Aim with your code

    from aim import Run

    # Initialize a new run
    run = Run()

    # Log run parameters
    run["hparams"] = {
        "learning_rate": 0.001,
        "batch_size": 32,
    }

    # Log metrics
    for i in range(10):
        run.track(i, name='loss', step=i, context={ "subset":"train" })
        run.track(i, name='acc', step=i, context={ "subset":"train" })

See the full list of supported trackable objects (e.g. images, text, etc.) here.

3. Run the training as usual and start Aim UI

    aim up

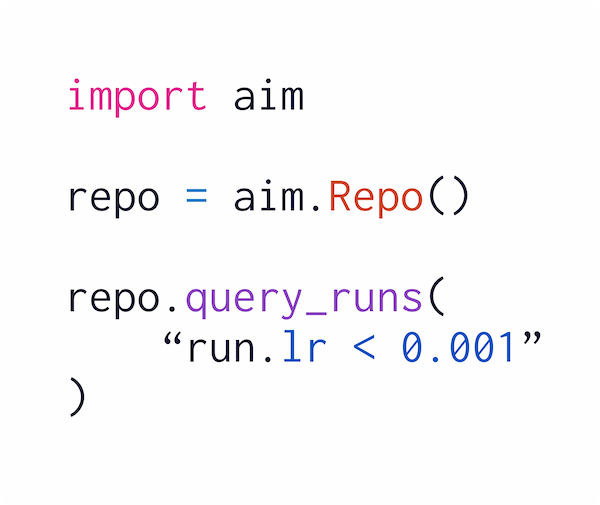

4. Or query runs programmatically via SDK

    from aim import Repo

    my_repo = Repo('/path/to/aim/repo')

    query = "metric.name == 'loss'"  # Example query

    # Get collection of metrics
    for run_metrics_collection in my_repo.query_metrics(query).iter_runs():
        for metric in run_metrics_collection:
            # Get run params
            params = metric.run[...]
            # Get metric values
            steps, metric_values = metric.values.sparse_numpy()
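Once `steps` and `metric_values` are in hand, further analysis is ordinary Python. The following is a minimal sketch under stated assumptions: `best_step` is a hypothetical helper, not part of the Aim SDK, and plain lists stand in for the arrays returned by the query above.

```python
def best_step(steps, values):
    """Return the (step, value) pair with the smallest tracked value."""
    if len(steps) != len(values) or len(steps) == 0:
        raise ValueError("steps and values must be non-empty and equally long")
    # Index of the minimum value; works for lists and other indexable sequences.
    index = min(range(len(values)), key=lambda i: values[i])
    return steps[index], values[index]


# Stand-in data shaped like the `steps, metric_values` pair (assumed values).
steps = [0, 1, 2, 3, 4]
metric_values = [0.9, 0.6, 0.4, 0.45, 0.5]

print(best_step(steps, metric_values))
```

The same pattern extends to other summaries, such as the last value or a moving average, computed per metric inside the query loop.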

Integrations

Integrate PyTorch Lightning

    from aim.pytorch_lightning import AimLogger

    # ...
    trainer = pl.Trainer(logger=AimLogger(experiment='experiment_name'))
    # ...

See documentation here.

Integrate Hugging Face

    from aim.hugging_face import AimCallback

    # ...
    aim_callback = AimCallback(repo='/path/to/logs/dir', experiment='mnli')
    trainer = Trainer(
        model=model,
        args=training_args,
        train_dataset=train_dataset if training_args.do_train else None,
        eval_dataset=eval_dataset if training_args.do_eval else None,
        callbacks=[aim_callback],
        # ...
    )
    # ...

See documentation here.

Integrate Keras & tf.keras

    import aim

    # ...
    model.fit(x_train, y_train, epochs=epochs, callbacks=[
        aim.keras.AimCallback(repo='/path/to/logs/dir', experiment='experiment_name')

        # Use aim.tensorflow.AimCallback instead in case of tf.keras:
        # aim.tensorflow.AimCallback(repo='/path/to/logs/dir', experiment='experiment_name')
    ])
    # ...

See documentation here.

Integrate KerasTuner

    from aim.keras_tuner import AimCallback

    # ...
    tuner.search(
        train_ds,
        validation_data=test_ds,
        callbacks=[AimCallback(tuner=tuner, repo='.', experiment='keras_tuner_test')],
    )
    # ...

See documentation here.

Integrate XGBoost

    from aim.xgboost import AimCallback

    # ...
    aim_callback = AimCallback(repo='/path/to/logs/dir', experiment='experiment_name')
    bst = xgb.train(param, xg_train, num_round, watchlist, callbacks=[aim_callback])
    # ...

See documentation here.

Integrate CatBoost

    from aim.catboost import AimLogger

    # ...
    model.fit(train_data, train_labels, log_cout=AimLogger(loss_function='Logloss'), logging_level="Info")
    # ...

See documentation here.

Integrate fastai

    from aim.fastai import AimCallback

    # ...
    learn = cnn_learner(dls, resnet18, pretrained=True,
                        loss_func=CrossEntropyLossFlat(),
                        metrics=accuracy, model_dir="/tmp/model/",
                        cbs=AimCallback(repo='.', experiment='fastai_test'))
    # ...

See documentation here.

Integrate LightGBM

    from aim.lightgbm import AimCallback

    # ...
    aim_callback = AimCallback(experiment='lgb_test')
    aim_callback.experiment['hparams'] = params

    gbm = lgb.train(params,
                    lgb_train,
                    num_boost_round=20,
                    valid_sets=lgb_eval,
                    callbacks=[aim_callback, lgb.early_stopping(stopping_rounds=5)])
    # ...

See documentation here.

Integrate PyTorch Ignite

    from aim.pytorch_ignite import AimLogger

    # ...
    aim_logger = AimLogger()

    aim_logger.log_params({
        "model": model.__class__.__name__,
        "pytorch_version": str(torch.__version__),
        "ignite_version": str(ignite.__version__),
    })

    aim_logger.attach_output_handler(
        trainer,
        event_name=Events.ITERATION_COMPLETED,
        tag="train",
        output_transform=lambda loss: {'loss': loss}
    )
    # ...

See documentation here.

Comparisons to familiar tools

TensorBoard

Training run comparison

Order of magnitude faster training run comparison with Aim

- Tracked params are first-class citizens in Aim. You can search, group and aggregate via params, and deeply explore all the tracked data (metrics, params, images) on the UI.

- With TensorBoard, users are forced to record those parameters in the training run name to be able to search and compare. This makes comparison tedious and causes usability issues on the UI when there are many experiments and params. TensorBoard doesn't have features to group or aggregate the metrics.
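To make the contrast concrete, here is a minimal sketch in plain Python. It uses neither TensorBoard nor Aim; the run-name scheme, the regular expression, and the record layout are hypothetical and exist only to show why structured params are easier to filter on than params encoded in a name.

```python
import re

# Hypothetical naming scheme: hyperparameters squeezed into the run name.
NAME_PATTERN = re.compile(r"lr(?P<lr>[0-9.e-]+)_bs(?P<bs>\d+)")


def params_from_name(run_name):
    """Recover hyperparameters from a run name that follows the scheme above."""
    match = NAME_PATTERN.search(run_name)
    if match is None:
        raise ValueError(f"run name does not follow the scheme: {run_name!r}")
    return {"learning_rate": float(match["lr"]), "batch_size": int(match["bs"])}


# Params encoded in names: every filter needs a parsing step.
run_names = ["resnet_lr0.001_bs32", "resnet_lr0.01_bs32", "resnet_lr0.01_bs64"]
from_names = [n for n in run_names if params_from_name(n)["batch_size"] == 32]

# Params stored as structured data: the filter is a direct lookup.
runs = [
    {"name": "run-a", "hparams": {"learning_rate": 0.001, "batch_size": 32}},
    {"name": "run-b", "hparams": {"learning_rate": 0.01, "batch_size": 32}},
    {"name": "run-c", "hparams": {"learning_rate": 0.01, "batch_size": 64}},
]
structured = [r["name"] for r in runs if r["hparams"]["batch_size"] == 32]

print(from_names)
print(structured)
```

The name-based approach also breaks as soon as a run deviates from the naming scheme, whereas structured params carry no such coupling.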

Scalability

- Aim is built to handle 1000s of training runs - both on the backend and on the UI.

- TensorBoard becomes really slow and hard to use when a few hundred training runs are queried / compared.

Beloved TensorBoard visualizations to be added to Aim

- Embedding projector.

- Neural network visualization.

MLflow

MLflow is an end-to-end ML lifecycle tool. Aim is focused on training tracking. The main differences between Aim and MLflow are around UI scalability and run comparison features.

Run comparison

- Aim treats tracked parameters as first-class citizens. Users can query runs, metrics, images and filter using the params.

- MLflow does have a search by tracked config, but there is no grouping, aggregation, subplotting by hyperparams or other comparison features available.

UI Scalability

- Aim UI can handle several thousands of metrics at the same time smoothly with 1000s of steps. It may get shaky when you explore 1000s of metrics with 10000s of steps each. But we are constantly optimizing!

- MLflow UI becomes slow to use when there are a few hundred runs.

Weights and Biases

Hosted vs self-hosted

- Weights and Biases is a hosted closed-source MLOps platform.

- Aim is a self-hosted, free and open-source experiment tracking tool.

Roadmap

Detailed Sprints

The Aim product roadmap

- The Backlog contains the issues we are going to choose from and prioritize weekly
- The issues are mainly prioritized by the highly-requested features

High-level roadmap

The high-level features we are going to work on over the next few months

Done

- Live updates (Shipped: Oct 18 2021)

- Images tracking and visualization (Start: Oct 18 2021, Shipped: Nov 19 2021)

- Distributions tracking and visualization (Start: Nov 10 2021, Shipped: Dec 3 2021)

- Jupyter integration (Start: Nov 18 2021, Shipped: Dec 3 2021)

- Audio tracking and visualization (Start: Dec 6 2021, Shipped: Dec 17 2021)

- Transcripts tracking and visualization (Start: Dec 6 2021, Shipped: Dec 17 2021)

- Plotly integration (Start: Dec 1 2021, Shipped: Dec 17 2021)

- Colab integration (Start: Nov 18 2021, Shipped: Dec 17 2021)

- Centralized tracking server (Start: Oct 18 2021, Shipped: Jan 22 2022)

- TensorBoard adaptor - visualize TensorBoard logs with Aim (Start: Dec 17 2021, Shipped: Feb 3 2022)

- Track git info, env vars, CLI arguments, dependencies (Start: Jan 17 2022, Shipped: Feb 3 2022)

- MLflow adaptor (visualize MLflow logs with Aim) (Start: Feb 14 2022, Shipped: Feb 22 2022)

- Activeloop Hub integration (Start: Feb 14 2022, Shipped: Feb 22 2022)

- PyTorch-Ignite integration (Start: Feb 14 2022, Shipped: Feb 22 2022)

- Run summary and overview info (system params, CLI args, git info, ...) (Start: Feb 14 2022, Shipped: Mar 9 2022)

- Add DVC related metadata into aim run (Start: Mar 7 2022, Shipped: Mar 26 2022)

- Ability to attach notes to Run from UI (Start: Mar 7 2022, Shipped: Apr 29 2022)

- Fairseq integration (Start: Mar 27 2022, Shipped: Mar 29 2022)

- LightGBM integration (Start: Apr 14 2022, Shipped: May 17 2022)

- CatBoost integration (Start: Apr 20 2022, Shipped: May 17 2022)

- Run execution details (display stdout/stderr logs) (Start: Apr 25 2022, Shipped: May 17 2022)

- Long sequences (up to 5M steps) support (Start: Apr 25 2022, Shipped: Jun 22 2022)

- Figures Explorer (Start: Mar 1 2022, Shipped: Aug 21 2022)

- Notify on stuck runs (Start: Jul 22 2022, Shipped: Aug 21 2022)

- Integration with KerasTuner (Start: Aug 10 2022, Shipped: Aug 21 2022)

- Integration with WandB (Start: Aug 15 2022, Shipped: Aug 21 2022)

- Stable remote tracking server (Start: Jun 15 2022, Shipped: Aug 21 2022)

- Integration with fast.ai (Start: Aug 22 2022, Shipped: Oct 6 2022)

- Integration with MXNet (Start: Sep 20 2022, Shipped: Oct 6 2022)

- Project overview page (Start: Sep 1 2022, Shipped: Oct 6 2022)

- Remote tracking server scaling (Start: Sep 11 2022, Shipped: Nov 26 2022)

- Integration with PaddlePaddle (Start: Oct 2 2022, Shipped: Nov 26 2022)

- Integration with Optuna (Start: Oct 2 2022, Shipped: Nov 26 2022)

- Audios Explorer (Start: Oct 30 2022, Shipped: Nov 26 2022)

- Experiment page (Start: Nov 9 2022, Shipped: Nov 26 2022)

In Progress

- Aim SDK low-level interface (Start: Aug 22 2022)

- HuggingFace datasets (Start: Dec 29 2022)

To Do

Aim UI

- Runs management
    - Runs explorer – query and visualize runs data (images, audio, distributions, ...) in a central dashboard
- Explorers
    - Text Explorer
    - Distributions Explorer
- Dashboards – customizable layouts with embedded explorers

SDK and Storage

- Scalability
    - Smooth UI and SDK experience with over 10,000 runs
- Runs management
    - CLI interfaces
        - Reporting - runs summary and run details in a CLI compatible format
        - Manipulations – copy, move, delete runs, params and sequences

Integrations

- ML Frameworks:
    - Shortlist: MONAI, SpaCy, Raytune
- Resource management tools
    - Shortlist: Kubeflow, Slurm
- Workflow orchestration tools
- Others: Hydra, Google MLMD, Streamlit, ...

On hold

- scikit-learn integration

- Cloud storage support – store runs blob (e.g. images) data on the cloud (Start: Mar 21 2022)

- Artifact storage – store files, model checkpoints, and beyond (Start: Mar 21 2022)

Community

If you have questions


Download files


File details

Details for the file aim-3.16.2.tar.gz.

File metadata

- Download URL: aim-3.16.2.tar.gz

- Upload date:

- Size: 1.5 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.10.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | e3d7d54af91f7a8f9767f9437a9aeaae79f729935bc7c0d90cc779213fd23182 |
| MD5 | f33d50ca06a64a30ba3d8b761caec6f4 |
| BLAKE2b-256 | 497f332b355cd55cf3d0a34556381b1490289e1f12626799b1bca11d458f4b25 |
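A published digest is only useful if the downloaded file is checked against it. The following is a minimal sketch using Python's standard `hashlib`; the helper names `sha256_of_file` and `matches_published_digest` are hypothetical, and the file path in the usage note assumes the archive was saved to the current directory.

```python
import hashlib


def sha256_of_file(path, chunk_size=1 << 20):
    """Compute the SHA256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read fixed-size chunks so large wheels are never loaded whole.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_digest(path, expected_hex):
    """Compare a file's SHA256 with a published hex digest, ignoring case and whitespace."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

For example, `matches_published_digest("aim-3.16.2.tar.gz", "e3d7d54af91f7a8f9767f9437a9aeaae79f729935bc7c0d90cc779213fd23182")` should return `True` for an intact copy of the source distribution described above.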

File details

Details for the file aim-3.16.2-cp311-cp311-manylinux_2_24_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp311-cp311-manylinux_2_24_x86_64.whl

- Upload date:

- Size: 5.7 MB

- Tags: CPython 3.11, manylinux: glibc 2.24+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.16
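The tags listed for each built distribution are also encoded in the wheel's file name, which follows the pattern `{distribution}-{version}(-{build})-{python tag}-{abi tag}-{platform tag}.whl`. The sketch below splits a file name into those parts; `WheelName` and `parse_wheel_filename` are hypothetical helpers written for illustration, not part of pip or Aim.

```python
from typing import NamedTuple, Optional


class WheelName(NamedTuple):
    distribution: str
    version: str
    build: Optional[str]
    python_tag: str
    abi_tag: str
    platform_tag: str


def parse_wheel_filename(filename: str) -> WheelName:
    """Split a wheel file name into its dash-separated components."""
    if not filename.endswith(".whl"):
        raise ValueError(f"not a wheel file name: {filename!r}")
    parts = filename[: -len(".whl")].split("-")
    if len(parts) == 5:
        distribution, version, python_tag, abi_tag, platform_tag = parts
        build = None
    elif len(parts) == 6:
        # The optional build tag sits between the version and the python tag.
        distribution, version, build, python_tag, abi_tag, platform_tag = parts
    else:
        raise ValueError(f"unexpected number of components in {filename!r}")
    return WheelName(distribution, version, build, python_tag, abi_tag, platform_tag)


info = parse_wheel_filename("aim-3.16.2-cp311-cp311-manylinux_2_24_x86_64.whl")
print(info.python_tag, info.abi_tag, info.platform_tag)
```

A platform tag may contain several dot-separated alternatives, as in `manylinux_2_17_x86_64.manylinux2014_x86_64`; calling `info.platform_tag.split(".")` lists them individually.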

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 83c27101142f084bc5e1e3984a146af3676bfeda3914bf33c975e4b5e19f61be |
| MD5 | 7d6c705103eddc4daf46c4b70c711341 |
| BLAKE2b-256 | f73084aea975b74af23aa46d878a7a785d741ffaea992c7ff38baff1e7015939 |

File details

Details for the file aim-3.16.2-cp311-cp311-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp311-cp311-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 7.2 MB

- Tags: CPython 3.11, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.16

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 49f2fe224e42c4a05c4ad877f3cadefa81a373dad09d4e082b711abe13795d5d |
| MD5 | 5243fa2368d3202453556f7cb2d7f569 |
| BLAKE2b-256 | a33bca88925f4fc1930accdb6b24e766d221beb2088a3d2c6e04e4b9c6380229 |

File details

Details for the file aim-3.16.2-cp311-cp311-macosx_11_0_arm64.whl.

File metadata

- Download URL: aim-3.16.2-cp311-cp311-macosx_11_0_arm64.whl

- Upload date:

- Size: 2.3 MB

- Tags: CPython 3.11, macOS 11.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.11.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | e795c490fb3317c6d8c94a96fc59a2fd64ab2905059b01c878aeb51e5181699e |
| MD5 | fb3524483591c41f4cb71a3c74cf747a |
| BLAKE2b-256 | 79e8c647a0be3060425e9bfc7f5e7b1e393c618e193fd1ffd89d464f83f07502 |

File details

Details for the file aim-3.16.2-cp311-cp311-macosx_10_14_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp311-cp311-macosx_10_14_x86_64.whl

- Upload date:

- Size: 2.4 MB

- Tags: CPython 3.11, macOS 10.14+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.11.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3ac704fe61f7c8c7c53956c8f23aa2fac7fde01bd24df55f10982b1eeb0435bf |
| MD5 | 8c6c056feea52d36184ebe0809662e69 |
| BLAKE2b-256 | 185629e2b68c23fc8bdc9334cdad6633e5f6c8cf410cbeb2b7e95aed6587b859 |

File details

Details for the file aim-3.16.2-cp310-cp310-manylinux_2_24_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp310-cp310-manylinux_2_24_x86_64.whl

- Upload date:

- Size: 5.6 MB

- Tags: CPython 3.10, manylinux: glibc 2.24+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.16

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 5dcf7df79077d3484b495c29248816803f87baf9c53f4da10f01d30e5ef92d08 |
| MD5 | 77646c4ae8b5e8f267c72959ec08ce4e |
| BLAKE2b-256 | e15d42ee243402398ade67d9e105bb7b531fa4d40b8383581135286b309c1c9c |

File details

Details for the file aim-3.16.2-cp310-cp310-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp310-cp310-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 6.7 MB

- Tags: CPython 3.10, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.16

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 628d3035b9bb7f7448190cdc21cc11cbfc99dab58150667528d9e6c104baf080 |
| MD5 | 648f583e3d7fc527a5db6c9ec2cd0149 |
| BLAKE2b-256 | 348d25b19972d93f6b03fa4e0c937b80a10c0056d9deca8c663a5249203fb865 |

File details

Details for the file aim-3.16.2-cp310-cp310-macosx_11_0_arm64.whl.

File metadata

- Download URL: aim-3.16.2-cp310-cp310-macosx_11_0_arm64.whl

- Upload date:

- Size: 2.4 MB

- Tags: CPython 3.10, macOS 11.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.10.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 815249817292fede727d4fab976b13f95dce163391d289f0b734d61776151748 |
| MD5 | b26110a0b994049daaeeb452808dd7cd |
| BLAKE2b-256 | a68f5bc0a4bce4c74a9a4f19815010cb708d72021f375b2b831364093f5fa007 |

File details

Details for the file aim-3.16.2-cp310-cp310-macosx_10_14_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp310-cp310-macosx_10_14_x86_64.whl

- Upload date:

- Size: 2.5 MB

- Tags: CPython 3.10, macOS 10.14+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.10.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3e5a712ae589653655f235d35b0dee807cddd9a09395072b9bd8782559583f1a |
| MD5 | 5c94326a757a2a1b64a902ae53d8a3eb |
| BLAKE2b-256 | 99756949dc4b12347b1499638cee7cce2dbb24e76f24738472d46578f84b1e9b |

File details

Details for the file aim-3.16.2-cp39-cp39-manylinux_2_24_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp39-cp39-manylinux_2_24_x86_64.whl

- Upload date:

- Size: 5.6 MB

- Tags: CPython 3.9, manylinux: glibc 2.24+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.16

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | e4660983fc8e7855b8e923dec209a9ddbc7718cab2c3bf27420f98c44daf24d2 |
| MD5 | a211b232d26034245f2adb16a55e6c5a |
| BLAKE2b-256 | 1173fc2a747128be80ab3c09ca202614615a225c52291eb1e792904f807e4620 |

File details

Details for the file aim-3.16.2-cp39-cp39-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp39-cp39-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 6.7 MB

- Tags: CPython 3.9, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.16

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 8648eb3f3a848fc88a91590c1dea3d2905326b2f708a155d3b72442f8c593cc0 |
| MD5 | 13a4389f17ffb1852fd6a93bde009df0 |
| BLAKE2b-256 | f74aa3610590e0be28e25612c8753612ea47d18e6e73692926b34112bb61eac0 |

File details

Details for the file aim-3.16.2-cp39-cp39-macosx_11_0_arm64.whl.

File metadata

- Download URL: aim-3.16.2-cp39-cp39-macosx_11_0_arm64.whl

- Upload date:

- Size: 2.3 MB

- Tags: CPython 3.9, macOS 11.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.9.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | a59935528d2d0f8f53d1b0de43bb32b67740ee169b999d7046ab5740d8ac41e1 |
| MD5 | d23549a8a961c04e02717947c42328cd |
| BLAKE2b-256 | 9ad995465645f866620b9a535cb06964be29bd2263489c7d56d3a02e127de725 |

File details

Details for the file aim-3.16.2-cp39-cp39-macosx_10_14_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp39-cp39-macosx_10_14_x86_64.whl

- Upload date:

- Size: 2.4 MB

- Tags: CPython 3.9, macOS 10.14+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.9.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 46f028ff5aaa2176b913355fcbe92a7a9a8f30e3c9004c0d8ff6ea83d3bc50fc |
| MD5 | f88cac88a18aa6969434b89c3e0301a5 |
| BLAKE2b-256 | b7a4b6825744bd5b36658a87287ba54e86c1fed1d8f14f039612c7c94dc4a69b |

File details

Details for the file aim-3.16.2-cp38-cp38-manylinux_2_24_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp38-cp38-manylinux_2_24_x86_64.whl

- Upload date:

- Size: 5.8 MB

- Tags: CPython 3.8, manylinux: glibc 2.24+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.16

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 09642c162b77a4149c0a7293f1a5e490a67fa830fb24f781d63a7c869c352cfa |
| MD5 | 65850ef55e43290f0bb12102d4847d67 |
| BLAKE2b-256 | 249fe4ee374353162970f47a2dd9affac71f267a14213b9f9fb6868411b04743 |

File details

Details for the file aim-3.16.2-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 6.8 MB

- Tags: CPython 3.8, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.16

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 32f9904533a08def4cbcd41d3bdcab1bf52008d6812743703fafdcee893d9bc9 |
| MD5 | b5320a8b05797df539df817d5d7fd482 |
| BLAKE2b-256 | 6128a77ae2dd63f1f0ed93a884a08a9a19e5c418549c64b537bbed807146efb2 |

File details

Details for the file aim-3.16.2-cp38-cp38-macosx_11_0_arm64.whl.

File metadata

- Download URL: aim-3.16.2-cp38-cp38-macosx_11_0_arm64.whl

- Upload date:

- Size: 2.4 MB

- Tags: CPython 3.8, macOS 11.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.8.13

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 7f8632d1cc0bcf29b3c7884716a20d676eb7a9d106aef0f001f4c1747a95394f |
| MD5 | 31ed56d1247ea0b2305414adf590c3c4 |
| BLAKE2b-256 | 2495f1abc6eab26c091550dd9b219bb8a282d84831368f5ac777436b0c3c2783 |

File details

Details for the file aim-3.16.2-cp38-cp38-macosx_10_14_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp38-cp38-macosx_10_14_x86_64.whl

- Upload date:

- Size: 2.5 MB

- Tags: CPython 3.8, macOS 10.14+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.8.13

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | dc333bb0d8da603da76b9e56ffebb1485ea49aed896589e28376e6d18aec3fea |
| MD5 | 420877dbc52abb414c23e4a403ee1d14 |
| BLAKE2b-256 | 568bfd14fd61f2a72d31c44ea18314eebffdcfdb626389643f8b65611710b97e |

File details

Details for the file aim-3.16.2-cp37-cp37m-manylinux_2_24_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp37-cp37m-manylinux_2_24_x86_64.whl

- Upload date:

- Size: 5.5 MB

- Tags: CPython 3.7m, manylinux: glibc 2.24+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.16

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3591bf467433c1b4ed3666c16d8049131523dc6526137a290f21d9990cf66401 |
| MD5 | 4fea77ccab933b43d0ffdff512547801 |
| BLAKE2b-256 | a332db05f89dfb163bd5cecd6b00c15f972df75bc777115ecc1da375835d4b48 |

File details

Details for the file aim-3.16.2-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 6.4 MB

- Tags: CPython 3.7m, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.16

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 618b7c93fc7448b16682014ef72b08d42a4934011896ba77f8d706c9968d1d7c |
| MD5 | d4e9375ed06ddb7adc4a861271154786 |
| BLAKE2b-256 | 1b94f63f3c882a86bd24b3cd07bcd77649032e61af1c17641cf0e38c71ab3586 |

File details

Details for the file aim-3.16.2-cp37-cp37m-macosx_10_14_x86_64.whl.

File metadata

- Download URL: aim-3.16.2-cp37-cp37m-macosx_10_14_x86_64.whl

- Upload date:

- Size: 2.5 MB

- Tags: CPython 3.7m, macOS 10.14+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.7.13

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 904311c9e56ed6a503a277fe3827da97e74ab20e238bf8a7fe59a9abd32f4266 |
| MD5 | d789f1a94600b7e1e81d67387debf30c |
| BLAKE2b-256 | b5019f352b881632b92dff9e9d7e8debb8c0ed7b2b1bb4afb83f6b9fe4936196 |