
LMQL

A query language for programming (large) language models.


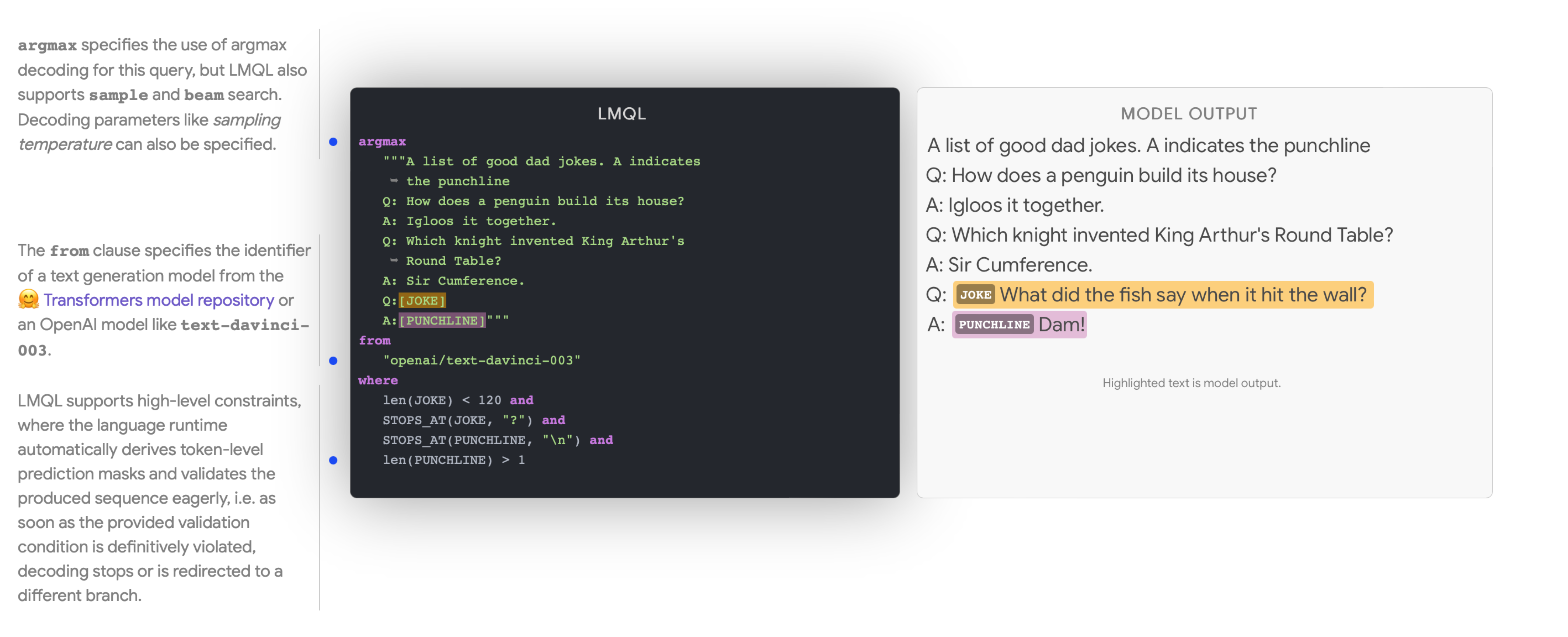

LMQL is a query language for large language models (LLMs). It facilitates LLM interaction by combining the benefits of natural language prompting with the expressiveness of Python. With only a few lines of LMQL code, users can express advanced, multi-part, and tool-augmented LM queries, which the LMQL runtime then optimizes to run efficiently as part of the LM decoding loop.

Example of a simple LMQL program.

Getting Started

To install the latest version of LMQL, run the following command in an environment with Python >=3.10 installed.

pip install lmql

Local GPU Support: If you want to run models on a local GPU, make sure to install LMQL in an environment with a GPU-enabled installation of PyTorch >= 1.11 (cf. https://pytorch.org/get-started/locally/).
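The requirements above (Python >=3.10 and, for local GPU use, a GPU-enabled PyTorch >= 1.11) can be checked before installing. The following is a minimal sketch, not part of LMQL itself: the helper names parse_version and check_environment are hypothetical, and the script only reports what it finds.

```python
import importlib.util
import sys


def parse_version(text):
    """Return the leading numeric (major, minor) pair of a version string."""
    parts = []
    # Drop local build tags such as "+cu117" before splitting.
    for piece in text.split("+")[0].split(".")[:2]:
        digits = "".join(ch for ch in piece if ch.isdigit())
        parts.append(int(digits) if digits else 0)
    while len(parts) < 2:
        parts.append(0)
    return tuple(parts)


def check_environment():
    """Collect the installation requirements stated above as booleans."""
    report = {
        "python_ok": sys.version_info >= (3, 10),
        "torch_installed": importlib.util.find_spec("torch") is not None,
        "torch_version_ok": False,
        "cuda_available": False,
    }
    if report["torch_installed"]:
        import torch  # imported lazily so the check also runs without PyTorch

        report["torch_version_ok"] = parse_version(torch.__version__) >= (1, 11)
        report["cuda_available"] = torch.cuda.is_available()
    return report


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name}: {ok}")
```

If cuda_available is reported as False, only API-integrated models are usable from that environment, and PyTorch should be reinstalled following https://pytorch.org/get-started/locally/ before running local models.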

Running LMQL Programs

After installation, you can launch the LMQL playground IDE with the following command:

lmql playground

Using the LMQL playground requires an installation of Node.js. If you are in a conda-managed environment, you can install Node.js via

conda install nodejs=14.20 -c conda-forge

Otherwise, please see the official Node.js website (https://nodejs.org/en/download/) for instructions on how to install it on your system.

The lmql playground command launches a browser-based playground IDE, including a showcase of many example LMQL programs. If the IDE does not open automatically, go to http://localhost:3000.
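Because the playground depends on both the lmql command and Node.js, a quick check of the PATH can save a failed launch. This is a small sketch under two assumptions: the function name missing_playground_tools is hypothetical, and the Node.js executable is assumed to be called node (some distributions install it under a different name).

```python
import shutil


def missing_playground_tools(required=("lmql", "node")):
    """Return the required executables that are not found on PATH."""
    return [tool for tool in required if shutil.which(tool) is None]


if __name__ == "__main__":
    missing = missing_playground_tools()
    if missing:
        print("Not found on PATH: " + ", ".join(missing))
    else:
        print("lmql and node found; run 'lmql playground' and open http://localhost:3000")
```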

Alternatively, lmql run can be used to execute local .lmql files. Note that when using local HuggingFace Transformers models in the Playground IDE or via lmql run, you have to first launch an instance of the LMQL Inference API for the corresponding model via the command lmql serve-model.

Configuring OpenAI API Credentials

If you want to use OpenAI models, you have to configure your API credentials. To do so, create a file api.env in the active working directory, with the following contents.

openai-org: <org identifier>

openai-secret: <api secret>

For system-wide configuration, you can also create an api.env file at $HOME/.lmql/api.env or at the project root of your LMQL distribution (e.g. src/ in a development copy).
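To check which api.env file would be picked up and whether it has the expected keys, the lookup can be imitated in a few lines. This is an illustrative sketch, not LMQL's actual loader: the function names are hypothetical, the search order (working directory first, then $HOME/.lmql) is an assumption, and the project-root location is left out because it depends on the installation.

```python
from pathlib import Path


def candidate_paths(cwd=None, home=None):
    """List the api.env locations named above, working directory first."""
    cwd = Path(cwd) if cwd is not None else Path.cwd()
    home = Path(home) if home is not None else Path.home()
    return [cwd / "api.env", home / ".lmql" / "api.env"]


def parse_api_env(text):
    """Parse 'key: value' lines into a dict, skipping lines without a colon."""
    values = {}
    for line in text.splitlines():
        if ":" not in line:
            continue
        key, value = line.split(":", 1)
        values[key.strip()] = value.strip()
    return values


def load_credentials(cwd=None, home=None):
    """Return (path, values) for the first api.env found, else (None, {})."""
    for path in candidate_paths(cwd, home):
        if path.is_file():
            return path, parse_api_env(path.read_text(encoding="utf-8"))
    return None, {}


if __name__ == "__main__":
    path, values = load_credentials()
    # Print only which keys are present, never the secret itself.
    print(path, sorted(values))
```

A file is complete for OpenAI use when both openai-org and openai-secret appear in the parsed keys.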

Setting Up a Development Environment

To set up a conda environment for local LMQL development with GPU support, run the following commands:

# prepare conda environment

conda env create -f scripts/conda/requirements.yml -n lmql

conda activate lmql

# registers the `lmql` command in the current shell

source scripts/activate-dev.sh

Operating System: The GPU-enabled version of LMQL was tested on Ubuntu 22.04 with CUDA 12.0 and on Windows 10 via WSL2 with CUDA 11.7. The no-GPU version (see below) was tested on Ubuntu 22.04, macOS 13.2 Ventura, and Windows 10 via WSL2.

Development without GPU

This section outlines how to set up an LMQL development environment without local GPU support. Note that without local GPU support, LMQL can only use API-integrated models such as openai/text-davinci-003. Please see the OpenAI API documentation (https://platform.openai.com/docs/models/gpt-3-5) to learn more about the set of available models.

To set up a conda environment for LMQL without GPU support, run the following commands:

# prepare conda environment

conda env create -f scripts/conda/requirements-no-gpu.yml -n lmql-no-gpu

conda activate lmql-no-gpu

# registers the `lmql` command in the current shell

source scripts/activate-dev.sh


File details

Details for the file lmql-0.0.6.3.tar.gz.

File metadata

- Download URL: lmql-0.0.6.3.tar.gz

- Upload date:

- Size: 698.3 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.10.8

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 94fc1a40b6ac59c3c744d9b7c692d42378d0cead70aeb29c16331bfafcf4273b |
| MD5 | 2d7242ba74926292d817f70e293189e2 |
| BLAKE2b-256 | 765b8b26552c7bee713dc0a389c4ff4b41f7d34b17cbd7d73f0d5db6f4b8248f |

File details

Details for the file lmql-0.0.6.3-py3-none-any.whl.

File metadata

- Download URL: lmql-0.0.6.3-py3-none-any.whl

- Upload date:

- Size: 615.9 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.10.8

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 4c25c8d2393629b8afdefb9b51fd95e5ffc046224f35d614a7e756c4a0c1ad98 |
| MD5 | afb8c725a121ad139d644262855b305a |
| BLAKE2b-256 | 99910db9ebe1edc846627ffad195e1e0c7e6127d9cde0fd9302ff19c9c8b4d2b |
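
A downloaded file can be checked against the SHA256 digests listed in the file details. The sketch below uses only Python's standard hashlib module; the helper names sha256_of and matches_published are hypothetical, and the digests are copied from the tables in this page.

```python
import hashlib

# SHA256 digests as published in the file details of this page.
PUBLISHED_SHA256 = {
    "lmql-0.0.6.3.tar.gz": "94fc1a40b6ac59c3c744d9b7c692d42378d0cead70aeb29c16331bfafcf4273b",
    "lmql-0.0.6.3-py3-none-any.whl": "4c25c8d2393629b8afdefb9b51fd95e5ffc046224f35d614a7e756c4a0c1ad98",
}


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large archives need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path, filename):
    """Compare a local file against the published digest for `filename`."""
    return sha256_of(path) == PUBLISHED_SHA256[filename]
```

For example, matches_published("lmql-0.0.6.3.tar.gz", "lmql-0.0.6.3.tar.gz") should return True for an intact download in the current directory; a False result means the file differs from the published one and should not be installed.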